Joachim Breitner: Don t think, just defunctionalize

TL;DR: CPS-conversion and defunctionalization can help you to come up with a constant-stack algorithm.

Update: Turns out I inadvertedly plagiarized the talk The Best Refactoring You ve Never Heard Of by James Koppel. Please consider this a form of sincere flattery.

TL;DR: CPS-conversion and defunctionalization can help you to come up with a constant-stack algorithm.

Update: Turns out I inadvertedly plagiarized the talk The Best Refactoring You ve Never Heard Of by James Koppel. Please consider this a form of sincere flattery.

The starting point

Today, I ll take you on a another little walk through the land of program transformations. Let s begin with a simple binary tree, with value of unknown type in the leaves, as well as the canonical map function:

data T a = L a B (T a) (T a)

map1 :: (a -> b) -> T a -> T b

map1 f (L x) = L (f x)

map1 f (B t1 t2) = B (map1 f t1) (map1 f t2)

As you can see, this map function is using the program stack as it traverses the tree. Our goal is now to come up with a map function that does not use the stack!

Why? Good question! In Haskell, there wouldn t be a strong need for this, as the Haskell stack is allocated on the heap, just like your normal data, so there is plenty of stack space. But in other languages or environments, the stack space may have a hard limit, and it may be advised to not use unbounded stack space.

That aside, it s a fun exercise, and that s sufficient reason for me.

(In the following, I assume that tail-calls, i.e. those where a function end with another function call, but without modifying its result, do not actually use stack space. Once all recursive function calls are tail calls, the code is equivalent to an imperative loop, as we will see.)

Think?

We could now just stare at the problem (rather the code), and try to come up with a solution directly. We d probably think ok, as I go through the tree, I have to remember all the nodes above me so I need a list of those nodes and for each of these nodes, I also need to remember whether I am currently processing the left child, and yet have to look at the right one, or whether I am done with the left child so what do I have to remember about the current node ?

ah, my brain spins already. Maybe eventually I figure it out, but why think when we can derive the solution? So let s start with above map1, and rewrite it, in several, mechanical, steps into a stack-less, tail-recursive solution.

Go!

Before we set out, let me rewrite the map function using a local go helper, as follows:

map2 :: forall a b. (a -> b) -> T a -> T b

map2 f t = go t

where

go :: T a -> T b

go (L x) = L (f x)

go (B t1 t2) = B (go t1) (go t2)

This transformation (effectively the static argument transformation ) has the nice advantage that we do not have to pass f around all the time, and that when we copy the function, I only have to change the top-level name, but not the names of the inner functions.

Also, I find it more aesthetically pleasing.

CPS

A blunt, effective tool to turn code that is not yet using tail-calls into code that only uses tail-calls is use continuation-passing style. If we have a function of type -> t, we turn it into a function of type -> (t -> r) -> r, where r is the type of the result we want at the very end. This means the function now receives an extra argument, often named k for continuation, and instead of returning some x, the function calls k x.

We can apply this to our go function. Here, both t and r happen to be T b; the type of finished trees:

map3 :: forall a b. (a -> b) -> T a -> T b

map3 f t = go t (\r -> r)

where

go :: T a -> (T b -> T b) -> T b

go (L x) k = k (L (f x))

go (B t1 t2) k = go t1 (\r1 -> go t2 (\r2 -> k (B r1 r2)))

Note that when initially call go, we pass the identity function (\r -> r) as the initial continuation.

Alas, suddenly all function calls are in tail position, and this codes does not use stack space! Technically, we are done, although it is not quite satisfying; all these lambdas floating around obscure the meaning of the code, are maybe a bit slow to execute, and also, we didn t really learn much yet. This is certainly not the code we would have writing after thinking hard .

Defunctionalization

So let s continue rewriting the code to something prettier, simpler. Something that does not use lambdas like this.

Again, there is a mechanical technique that can help it. It likely won't make the code prettier, but it will get rid of the lambdas, so let s do that an clean up later.

The technique is called defunctionalization (because it replaces functional values by plain data values), and can be seen as a form of refinement.

Note that we pass around vales of type (T b -> T b), but we certainly don t mean the full type (T b -> T b). Instead, only very specific values of that type occur in our program, So let us replace (T b -> T b) with a data type that contains representatives of just the values we actually use.

- We find at all values of type

(T b -> T b). These are:

(\r -> r)(\r1 -> go t2 (\r2 -> k (B r1 r2)))(\r2 -> k (B r1 r2))

- We create a datatype with one constructor for each of these:

data K = I K1 K2

(This is not complete yet.)

- We introduce an interpretation function that turns a

K back into a (T b -> T b):

eval :: K -> (T b -> T b)

eval = (* TBD *)

- In the function

go, instead of taking a parameter of type (T b -> T b), we take a K. And when we actually use the continuation, we have to turn the K back to the function using eval:

go :: T a -> K a b -> T b

go (L x) k = eval k (L (f x))

go (B t1 t2) k = go t1 K1

We also do this to the code fragments identified in the first step; these become:

(\r -> r)(\r1 -> go t2 K2)(\r2 -> eval k (B r1 r2))

- Now we complete the

eval function: For each constructor, we simply map it to the corresponding lambda from step 1:

eval :: K -> (T b -> T b)

eval I = (\r -> r)

eval K1 = (\r1 -> go t2 K2)

eval K2 = (\r2 -> eval k (B r1 r2))

- This doesn t quite work yet: We have variables on the right hand side that are not bound (

t2, r1, k). So let s add them to the constructors K1 and K2 as needed. This also changes the type K itself; it now needs to take type parameters.

This leads us to the following code:

data K a b

= I

K1 (T a) (K a b)

K2 (T b) (K a b)

map4 :: forall a b. (a -> b) -> T a -> T b

map4 f t = go t I

where

go :: T a -> K a b -> T b

go (L x) k = eval k (L (f x))

go (B t1 t2) k = go t1 (K1 t2 k)

eval :: K a b -> (T b -> T b)

eval I = (\r -> r)

eval (K1 t2 k) = (\r1 -> go t2 (K2 r1 k))

eval (K2 r1 k) = (\r2 -> eval k (B r1 r2))

Not really cleaner or prettier, but everything is still tail-recursive, and we are now working with plain data.

We like lists

To clean it up a little bit, we can notice that the K data type really is just a list of values, where the values are either T a or T b. We do not need a custom data type for this! Instead of our K, we can just use the following, built from standard data types:

type K' a b = [Either (T a) (T b)]

Now I replace I with [], K1 t2 k with Left t2 : k and K2 r1 k with Right r1 : k. I also, very suggestively, rename go to down and eval to up:

map5 :: forall a b. (a -> b) -> T a -> T b

map5 f t = down t []

where

down :: T a -> K' a b -> T b

down (L x) k = up k (L (f x))

down (B t1 t2) k = down t1 (Left t2 : k)

up :: K' a b -> T b -> T b

up [] r = r

up (Left t2 : k) r1 = down t2 (Right r1 : k)

up (Right r1 : k) r2 = up k (B r1 r2)

At this point, the code suddenly makes more sense again. In fact, I can try to verbalize it:

As we traverse the tree, we have to remember for all parent nodes, whether there is still something Left to do when we come back to it (so we remember a T a), or if we are done with that (so we have a T b). This is the list K' a b.

We begin to go down the left of the tree (noting that the right siblings are still left to do), until we hit a leaf. We transform the leaf, and then go up.

If we go up and hit the root, we are done. Else, if we go up and there is something Left to do, we remember the subtree that we just processed (as that is already in the Right form), and go down the other subtree. But if we go up and there is nothing Left to do, we put the two subtrees together and continue going up.

Quite neat!

The imperative loop

At this point we could stop: the code is pretty, makes sense, and has the properties we want. But let s turn the dial a bit further and try to make it an imperative loop.

We know that if we have a single tail-recursive function, then that s equivalent to a loop, with the function s parameter turning into mutable variables. But we have two functions!

It turns out that if you have two functions a -> r and b -> r that have the same return type (which they necessarily have here, since we CPS-converted them further up), then those two functions are equivalent to a single function taking a or b , i.e. Either a b -> r. This really nothing else than the high-school level algebra rule of ra rb = ra + b.

So (after reordering the arguments of down to put T b first) we can rewrite the code as

map6 :: forall a b. (a -> b) -> T a -> T b

map6 f t = go (Left t) []

where

go :: Either (T a) (T b) -> K' a b -> T b

go (Left (L x)) k = go (Right (L (f x))) k

go (Left (B t1 t2)) k = go (Left t1) (Left t2 : k)

go (Right r) [] = r

go (Right r1) (Left t2 : k) = go (Left t2) (Right r1 : k)

go (Right r2) (Right r1 : k) = go (Right (B r1 r2)) k

Do you see the loop yet? If not, maybe it helps to compare it with the following equivalent imperative looking pseudo-code:

mapLoop :: forall a b. (a -> b) -> T a -> T b

mapLoop f t

var node = Left t;

var parents = [];

while (true)

switch (node)

Left (L x) -> node := Right (L (f x))

Left (B t1 t2) -> node := Left t1; parents.push(Left t2)

Right r1 ->

if (parents.len() == 0)

return r1;

else

switch (parents.pop())

Left t2 -> node := Left t2; parents.push(Right r1);

Right r2 -> node := Right (B r1 r2)

Conclusion

I find it enlightening to see how apparently very different approaches to a problem (recursive, lazy functions and imperative loops) are connected by a series of rather mechanical transformations. When refactoring code, it is helpful to see if one can conceptualize the refactoring as one of those mechanical steps (refinement, type equivalences, defunctionalization, cps conversion etc.)

If you liked this post, you might enjoy my talk The many faces of isOrderedTree, which I have presented at MuniHac 2019 and Haskell Love 2020.

data T a = L a B (T a) (T a)

map1 :: (a -> b) -> T a -> T b

map1 f (L x) = L (f x)

map1 f (B t1 t2) = B (map1 f t1) (map1 f t2)map1, and rewrite it, in several, mechanical, steps into a stack-less, tail-recursive solution.

Go!

Before we set out, let me rewrite the map function using a local go helper, as follows:

map2 :: forall a b. (a -> b) -> T a -> T b

map2 f t = go t

where

go :: T a -> T b

go (L x) = L (f x)

go (B t1 t2) = B (go t1) (go t2)

This transformation (effectively the static argument transformation ) has the nice advantage that we do not have to pass f around all the time, and that when we copy the function, I only have to change the top-level name, but not the names of the inner functions.

Also, I find it more aesthetically pleasing.

CPS

A blunt, effective tool to turn code that is not yet using tail-calls into code that only uses tail-calls is use continuation-passing style. If we have a function of type -> t, we turn it into a function of type -> (t -> r) -> r, where r is the type of the result we want at the very end. This means the function now receives an extra argument, often named k for continuation, and instead of returning some x, the function calls k x.

We can apply this to our go function. Here, both t and r happen to be T b; the type of finished trees:

map3 :: forall a b. (a -> b) -> T a -> T b

map3 f t = go t (\r -> r)

where

go :: T a -> (T b -> T b) -> T b

go (L x) k = k (L (f x))

go (B t1 t2) k = go t1 (\r1 -> go t2 (\r2 -> k (B r1 r2)))

Note that when initially call go, we pass the identity function (\r -> r) as the initial continuation.

Alas, suddenly all function calls are in tail position, and this codes does not use stack space! Technically, we are done, although it is not quite satisfying; all these lambdas floating around obscure the meaning of the code, are maybe a bit slow to execute, and also, we didn t really learn much yet. This is certainly not the code we would have writing after thinking hard .

Defunctionalization

So let s continue rewriting the code to something prettier, simpler. Something that does not use lambdas like this.

Again, there is a mechanical technique that can help it. It likely won't make the code prettier, but it will get rid of the lambdas, so let s do that an clean up later.

The technique is called defunctionalization (because it replaces functional values by plain data values), and can be seen as a form of refinement.

Note that we pass around vales of type (T b -> T b), but we certainly don t mean the full type (T b -> T b). Instead, only very specific values of that type occur in our program, So let us replace (T b -> T b) with a data type that contains representatives of just the values we actually use.

- We find at all values of type

(T b -> T b). These are:

(\r -> r)(\r1 -> go t2 (\r2 -> k (B r1 r2)))(\r2 -> k (B r1 r2))

- We create a datatype with one constructor for each of these:

data K = I K1 K2

(This is not complete yet.)

- We introduce an interpretation function that turns a

K back into a (T b -> T b):

eval :: K -> (T b -> T b)

eval = (* TBD *)

- In the function

go, instead of taking a parameter of type (T b -> T b), we take a K. And when we actually use the continuation, we have to turn the K back to the function using eval:

go :: T a -> K a b -> T b

go (L x) k = eval k (L (f x))

go (B t1 t2) k = go t1 K1

We also do this to the code fragments identified in the first step; these become:

(\r -> r)(\r1 -> go t2 K2)(\r2 -> eval k (B r1 r2))

- Now we complete the

eval function: For each constructor, we simply map it to the corresponding lambda from step 1:

eval :: K -> (T b -> T b)

eval I = (\r -> r)

eval K1 = (\r1 -> go t2 K2)

eval K2 = (\r2 -> eval k (B r1 r2))

- This doesn t quite work yet: We have variables on the right hand side that are not bound (

t2, r1, k). So let s add them to the constructors K1 and K2 as needed. This also changes the type K itself; it now needs to take type parameters.

This leads us to the following code:

data K a b

= I

K1 (T a) (K a b)

K2 (T b) (K a b)

map4 :: forall a b. (a -> b) -> T a -> T b

map4 f t = go t I

where

go :: T a -> K a b -> T b

go (L x) k = eval k (L (f x))

go (B t1 t2) k = go t1 (K1 t2 k)

eval :: K a b -> (T b -> T b)

eval I = (\r -> r)

eval (K1 t2 k) = (\r1 -> go t2 (K2 r1 k))

eval (K2 r1 k) = (\r2 -> eval k (B r1 r2))

Not really cleaner or prettier, but everything is still tail-recursive, and we are now working with plain data.

We like lists

To clean it up a little bit, we can notice that the K data type really is just a list of values, where the values are either T a or T b. We do not need a custom data type for this! Instead of our K, we can just use the following, built from standard data types:

type K' a b = [Either (T a) (T b)]

Now I replace I with [], K1 t2 k with Left t2 : k and K2 r1 k with Right r1 : k. I also, very suggestively, rename go to down and eval to up:

map5 :: forall a b. (a -> b) -> T a -> T b

map5 f t = down t []

where

down :: T a -> K' a b -> T b

down (L x) k = up k (L (f x))

down (B t1 t2) k = down t1 (Left t2 : k)

up :: K' a b -> T b -> T b

up [] r = r

up (Left t2 : k) r1 = down t2 (Right r1 : k)

up (Right r1 : k) r2 = up k (B r1 r2)

At this point, the code suddenly makes more sense again. In fact, I can try to verbalize it:

As we traverse the tree, we have to remember for all parent nodes, whether there is still something Left to do when we come back to it (so we remember a T a), or if we are done with that (so we have a T b). This is the list K' a b.

We begin to go down the left of the tree (noting that the right siblings are still left to do), until we hit a leaf. We transform the leaf, and then go up.

If we go up and hit the root, we are done. Else, if we go up and there is something Left to do, we remember the subtree that we just processed (as that is already in the Right form), and go down the other subtree. But if we go up and there is nothing Left to do, we put the two subtrees together and continue going up.

Quite neat!

The imperative loop

At this point we could stop: the code is pretty, makes sense, and has the properties we want. But let s turn the dial a bit further and try to make it an imperative loop.

We know that if we have a single tail-recursive function, then that s equivalent to a loop, with the function s parameter turning into mutable variables. But we have two functions!

It turns out that if you have two functions a -> r and b -> r that have the same return type (which they necessarily have here, since we CPS-converted them further up), then those two functions are equivalent to a single function taking a or b , i.e. Either a b -> r. This really nothing else than the high-school level algebra rule of ra rb = ra + b.

So (after reordering the arguments of down to put T b first) we can rewrite the code as

map6 :: forall a b. (a -> b) -> T a -> T b

map6 f t = go (Left t) []

where

go :: Either (T a) (T b) -> K' a b -> T b

go (Left (L x)) k = go (Right (L (f x))) k

go (Left (B t1 t2)) k = go (Left t1) (Left t2 : k)

go (Right r) [] = r

go (Right r1) (Left t2 : k) = go (Left t2) (Right r1 : k)

go (Right r2) (Right r1 : k) = go (Right (B r1 r2)) k

Do you see the loop yet? If not, maybe it helps to compare it with the following equivalent imperative looking pseudo-code:

mapLoop :: forall a b. (a -> b) -> T a -> T b

mapLoop f t

var node = Left t;

var parents = [];

while (true)

switch (node)

Left (L x) -> node := Right (L (f x))

Left (B t1 t2) -> node := Left t1; parents.push(Left t2)

Right r1 ->

if (parents.len() == 0)

return r1;

else

switch (parents.pop())

Left t2 -> node := Left t2; parents.push(Right r1);

Right r2 -> node := Right (B r1 r2)

Conclusion

I find it enlightening to see how apparently very different approaches to a problem (recursive, lazy functions and imperative loops) are connected by a series of rather mechanical transformations. When refactoring code, it is helpful to see if one can conceptualize the refactoring as one of those mechanical steps (refinement, type equivalences, defunctionalization, cps conversion etc.)

If you liked this post, you might enjoy my talk The many faces of isOrderedTree, which I have presented at MuniHac 2019 and Haskell Love 2020.

map2 :: forall a b. (a -> b) -> T a -> T b

map2 f t = go t

where

go :: T a -> T b

go (L x) = L (f x)

go (B t1 t2) = B (go t1) (go t2) -> t, we turn it into a function of type -> (t -> r) -> r, where r is the type of the result we want at the very end. This means the function now receives an extra argument, often named k for continuation, and instead of returning some x, the function calls k x.

We can apply this to our go function. Here, both t and r happen to be T b; the type of finished trees:

map3 :: forall a b. (a -> b) -> T a -> T b

map3 f t = go t (\r -> r)

where

go :: T a -> (T b -> T b) -> T b

go (L x) k = k (L (f x))

go (B t1 t2) k = go t1 (\r1 -> go t2 (\r2 -> k (B r1 r2)))go, we pass the identity function (\r -> r) as the initial continuation.

Alas, suddenly all function calls are in tail position, and this codes does not use stack space! Technically, we are done, although it is not quite satisfying; all these lambdas floating around obscure the meaning of the code, are maybe a bit slow to execute, and also, we didn t really learn much yet. This is certainly not the code we would have writing after thinking hard .

Defunctionalization

So let s continue rewriting the code to something prettier, simpler. Something that does not use lambdas like this.

Again, there is a mechanical technique that can help it. It likely won't make the code prettier, but it will get rid of the lambdas, so let s do that an clean up later.

The technique is called defunctionalization (because it replaces functional values by plain data values), and can be seen as a form of refinement.

Note that we pass around vales of type (T b -> T b), but we certainly don t mean the full type (T b -> T b). Instead, only very specific values of that type occur in our program, So let us replace (T b -> T b) with a data type that contains representatives of just the values we actually use.

- We find at all values of type

(T b -> T b). These are:

(\r -> r)(\r1 -> go t2 (\r2 -> k (B r1 r2)))(\r2 -> k (B r1 r2))

- We create a datatype with one constructor for each of these:

data K = I K1 K2

(This is not complete yet.)

- We introduce an interpretation function that turns a

K back into a (T b -> T b):

eval :: K -> (T b -> T b)

eval = (* TBD *)

- In the function

go, instead of taking a parameter of type (T b -> T b), we take a K. And when we actually use the continuation, we have to turn the K back to the function using eval:

go :: T a -> K a b -> T b

go (L x) k = eval k (L (f x))

go (B t1 t2) k = go t1 K1

We also do this to the code fragments identified in the first step; these become:

(\r -> r)(\r1 -> go t2 K2)(\r2 -> eval k (B r1 r2))

- Now we complete the

eval function: For each constructor, we simply map it to the corresponding lambda from step 1:

eval :: K -> (T b -> T b)

eval I = (\r -> r)

eval K1 = (\r1 -> go t2 K2)

eval K2 = (\r2 -> eval k (B r1 r2))

- This doesn t quite work yet: We have variables on the right hand side that are not bound (

t2, r1, k). So let s add them to the constructors K1 and K2 as needed. This also changes the type K itself; it now needs to take type parameters.

This leads us to the following code:

data K a b

= I

K1 (T a) (K a b)

K2 (T b) (K a b)

map4 :: forall a b. (a -> b) -> T a -> T b

map4 f t = go t I

where

go :: T a -> K a b -> T b

go (L x) k = eval k (L (f x))

go (B t1 t2) k = go t1 (K1 t2 k)

eval :: K a b -> (T b -> T b)

eval I = (\r -> r)

eval (K1 t2 k) = (\r1 -> go t2 (K2 r1 k))

eval (K2 r1 k) = (\r2 -> eval k (B r1 r2))

Not really cleaner or prettier, but everything is still tail-recursive, and we are now working with plain data.

We like lists

To clean it up a little bit, we can notice that the K data type really is just a list of values, where the values are either T a or T b. We do not need a custom data type for this! Instead of our K, we can just use the following, built from standard data types:

type K' a b = [Either (T a) (T b)]

Now I replace I with [], K1 t2 k with Left t2 : k and K2 r1 k with Right r1 : k. I also, very suggestively, rename go to down and eval to up:

map5 :: forall a b. (a -> b) -> T a -> T b

map5 f t = down t []

where

down :: T a -> K' a b -> T b

down (L x) k = up k (L (f x))

down (B t1 t2) k = down t1 (Left t2 : k)

up :: K' a b -> T b -> T b

up [] r = r

up (Left t2 : k) r1 = down t2 (Right r1 : k)

up (Right r1 : k) r2 = up k (B r1 r2)

At this point, the code suddenly makes more sense again. In fact, I can try to verbalize it:

As we traverse the tree, we have to remember for all parent nodes, whether there is still something Left to do when we come back to it (so we remember a T a), or if we are done with that (so we have a T b). This is the list K' a b.

We begin to go down the left of the tree (noting that the right siblings are still left to do), until we hit a leaf. We transform the leaf, and then go up.

If we go up and hit the root, we are done. Else, if we go up and there is something Left to do, we remember the subtree that we just processed (as that is already in the Right form), and go down the other subtree. But if we go up and there is nothing Left to do, we put the two subtrees together and continue going up.

Quite neat!

The imperative loop

At this point we could stop: the code is pretty, makes sense, and has the properties we want. But let s turn the dial a bit further and try to make it an imperative loop.

We know that if we have a single tail-recursive function, then that s equivalent to a loop, with the function s parameter turning into mutable variables. But we have two functions!

It turns out that if you have two functions a -> r and b -> r that have the same return type (which they necessarily have here, since we CPS-converted them further up), then those two functions are equivalent to a single function taking a or b , i.e. Either a b -> r. This really nothing else than the high-school level algebra rule of ra rb = ra + b.

So (after reordering the arguments of down to put T b first) we can rewrite the code as

map6 :: forall a b. (a -> b) -> T a -> T b

map6 f t = go (Left t) []

where

go :: Either (T a) (T b) -> K' a b -> T b

go (Left (L x)) k = go (Right (L (f x))) k

go (Left (B t1 t2)) k = go (Left t1) (Left t2 : k)

go (Right r) [] = r

go (Right r1) (Left t2 : k) = go (Left t2) (Right r1 : k)

go (Right r2) (Right r1 : k) = go (Right (B r1 r2)) k

Do you see the loop yet? If not, maybe it helps to compare it with the following equivalent imperative looking pseudo-code:

mapLoop :: forall a b. (a -> b) -> T a -> T b

mapLoop f t

var node = Left t;

var parents = [];

while (true)

switch (node)

Left (L x) -> node := Right (L (f x))

Left (B t1 t2) -> node := Left t1; parents.push(Left t2)

Right r1 ->

if (parents.len() == 0)

return r1;

else

switch (parents.pop())

Left t2 -> node := Left t2; parents.push(Right r1);

Right r2 -> node := Right (B r1 r2)

Conclusion

I find it enlightening to see how apparently very different approaches to a problem (recursive, lazy functions and imperative loops) are connected by a series of rather mechanical transformations. When refactoring code, it is helpful to see if one can conceptualize the refactoring as one of those mechanical steps (refinement, type equivalences, defunctionalization, cps conversion etc.)

If you liked this post, you might enjoy my talk The many faces of isOrderedTree, which I have presented at MuniHac 2019 and Haskell Love 2020.

(T b -> T b). These are:

(\r -> r)(\r1 -> go t2 (\r2 -> k (B r1 r2)))(\r2 -> k (B r1 r2))

data K = I K1 K2K back into a (T b -> T b):

eval :: K -> (T b -> T b)

eval = (* TBD *)go, instead of taking a parameter of type (T b -> T b), we take a K. And when we actually use the continuation, we have to turn the K back to the function using eval:

go :: T a -> K a b -> T b

go (L x) k = eval k (L (f x))

go (B t1 t2) k = go t1 K1(\r -> r)(\r1 -> go t2 K2)(\r2 -> eval k (B r1 r2))

eval function: For each constructor, we simply map it to the corresponding lambda from step 1:

eval :: K -> (T b -> T b)

eval I = (\r -> r)

eval K1 = (\r1 -> go t2 K2)

eval K2 = (\r2 -> eval k (B r1 r2))t2, r1, k). So let s add them to the constructors K1 and K2 as needed. This also changes the type K itself; it now needs to take type parameters.data K a b

= I

K1 (T a) (K a b)

K2 (T b) (K a b)

map4 :: forall a b. (a -> b) -> T a -> T b

map4 f t = go t I

where

go :: T a -> K a b -> T b

go (L x) k = eval k (L (f x))

go (B t1 t2) k = go t1 (K1 t2 k)

eval :: K a b -> (T b -> T b)

eval I = (\r -> r)

eval (K1 t2 k) = (\r1 -> go t2 (K2 r1 k))

eval (K2 r1 k) = (\r2 -> eval k (B r1 r2))K data type really is just a list of values, where the values are either T a or T b. We do not need a custom data type for this! Instead of our K, we can just use the following, built from standard data types:

type K' a b = [Either (T a) (T b)]I with [], K1 t2 k with Left t2 : k and K2 r1 k with Right r1 : k. I also, very suggestively, rename go to down and eval to up:

map5 :: forall a b. (a -> b) -> T a -> T b

map5 f t = down t []

where

down :: T a -> K' a b -> T b

down (L x) k = up k (L (f x))

down (B t1 t2) k = down t1 (Left t2 : k)

up :: K' a b -> T b -> T b

up [] r = r

up (Left t2 : k) r1 = down t2 (Right r1 : k)

up (Right r1 : k) r2 = up k (B r1 r2)As we traverse the tree, we have to remember for all parent nodes, whether there is still somethingQuite neat!Leftto do when we come back to it (so we remember aT a), or if we are done with that (so we have aT b). This is the listK' a b. We begin to godownthe left of the tree (noting that the right siblings are still left to do), until we hit a leaf. We transform the leaf, and then goup. If we goupand hit the root, we are done. Else, if we goupand there is somethingLeftto do, we remember the subtree that we just processed (as that is already in theRightform), and godownthe other subtree. But if we goupand there is nothingLeftto do, we put the two subtrees together and continue going up.

The imperative loop

At this point we could stop: the code is pretty, makes sense, and has the properties we want. But let s turn the dial a bit further and try to make it an imperative loop.

We know that if we have a single tail-recursive function, then that s equivalent to a loop, with the function s parameter turning into mutable variables. But we have two functions!

It turns out that if you have two functions a -> r and b -> r that have the same return type (which they necessarily have here, since we CPS-converted them further up), then those two functions are equivalent to a single function taking a or b , i.e. Either a b -> r. This really nothing else than the high-school level algebra rule of ra rb = ra + b.

So (after reordering the arguments of down to put T b first) we can rewrite the code as

map6 :: forall a b. (a -> b) -> T a -> T b

map6 f t = go (Left t) []

where

go :: Either (T a) (T b) -> K' a b -> T b

go (Left (L x)) k = go (Right (L (f x))) k

go (Left (B t1 t2)) k = go (Left t1) (Left t2 : k)

go (Right r) [] = r

go (Right r1) (Left t2 : k) = go (Left t2) (Right r1 : k)

go (Right r2) (Right r1 : k) = go (Right (B r1 r2)) k

Do you see the loop yet? If not, maybe it helps to compare it with the following equivalent imperative looking pseudo-code:

mapLoop :: forall a b. (a -> b) -> T a -> T b

mapLoop f t

var node = Left t;

var parents = [];

while (true)

switch (node)

Left (L x) -> node := Right (L (f x))

Left (B t1 t2) -> node := Left t1; parents.push(Left t2)

Right r1 ->

if (parents.len() == 0)

return r1;

else

switch (parents.pop())

Left t2 -> node := Left t2; parents.push(Right r1);

Right r2 -> node := Right (B r1 r2)

Conclusion

I find it enlightening to see how apparently very different approaches to a problem (recursive, lazy functions and imperative loops) are connected by a series of rather mechanical transformations. When refactoring code, it is helpful to see if one can conceptualize the refactoring as one of those mechanical steps (refinement, type equivalences, defunctionalization, cps conversion etc.)

If you liked this post, you might enjoy my talk The many faces of isOrderedTree, which I have presented at MuniHac 2019 and Haskell Love 2020.

map6 :: forall a b. (a -> b) -> T a -> T b

map6 f t = go (Left t) []

where

go :: Either (T a) (T b) -> K' a b -> T b

go (Left (L x)) k = go (Right (L (f x))) k

go (Left (B t1 t2)) k = go (Left t1) (Left t2 : k)

go (Right r) [] = r

go (Right r1) (Left t2 : k) = go (Left t2) (Right r1 : k)

go (Right r2) (Right r1 : k) = go (Right (B r1 r2)) kmapLoop :: forall a b. (a -> b) -> T a -> T b

mapLoop f t

var node = Left t;

var parents = [];

while (true)

switch (node)

Left (L x) -> node := Right (L (f x))

Left (B t1 t2) -> node := Left t1; parents.push(Left t2)

Right r1 ->

if (parents.len() == 0)

return r1;

else

switch (parents.pop())

Left t2 -> node := Left t2; parents.push(Right r1);

Right r2 -> node := Right (B r1 r2)

isOrderedTree, which I have presented at MuniHac 2019 and Haskell Love 2020.

Currently, the Government procures rice in vast quantities and the farmers are assured at least some basic income, in the states of Punjab and Haryana

Currently, the Government procures rice in vast quantities and the farmers are assured at least some basic income, in the states of Punjab and Haryana

PTI News, Nov 27, 2020

PTI News, Nov 27, 2020

long time no blog post. & the

long time no blog post. & the

Like

Like  Statutory warning It s a long read.

I start by sharing I regret, I did not hold onto the

Statutory warning It s a long read.

I start by sharing I regret, I did not hold onto the Another interesting story which I had shared was a bit about

Another interesting story which I had shared was a bit about  America was

America was  One another interesting point I came to know is that Canada is home to bicycling

One another interesting point I came to know is that Canada is home to bicycling

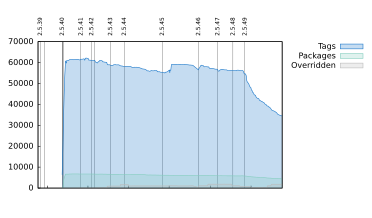

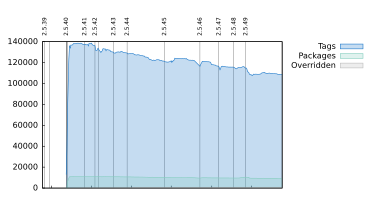

These are the last hours when we can update packages and they migrate to testing after 10 days right before the full freeze on 5 February.

The latest Wireshark upstream version, 2.2.4 has been released on Monday and it is waiting patiently to be part of next Debian stable.

I have just tested the fix for Kodi s bug preventing playing DVD-s and today it will be fixed in unstable as well.

If you have packages which could be updated to make Stretch even better you can still do it today, but don t wait too long!

Thanks to everyone working on Debian! Stretch will be awesome!

These are the last hours when we can update packages and they migrate to testing after 10 days right before the full freeze on 5 February.

The latest Wireshark upstream version, 2.2.4 has been released on Monday and it is waiting patiently to be part of next Debian stable.

I have just tested the fix for Kodi s bug preventing playing DVD-s and today it will be fixed in unstable as well.

If you have packages which could be updated to make Stretch even better you can still do it today, but don t wait too long!

Thanks to everyone working on Debian! Stretch will be awesome!

I pledge that before sending each email to the debian-devel mailing list I move forward at least one actionable bug in my packages.

I pledge that before sending each email to the debian-devel mailing list I move forward at least one actionable bug in my packages.

I have just finished level one, fixing all RC bugs in

I have just finished level one, fixing all RC bugs in  Hardening all executables by making them position independent by default is basically ready with a few packages to fix (

Hardening all executables by making them position independent by default is basically ready with a few packages to fix (

Shipping Position Independent Executables and using read-only Global Offset Table was already possible for packages but needed package maintainers to opt-in for each package (see

Shipping Position Independent Executables and using read-only Global Offset Table was already possible for packages but needed package maintainers to opt-in for each package (see